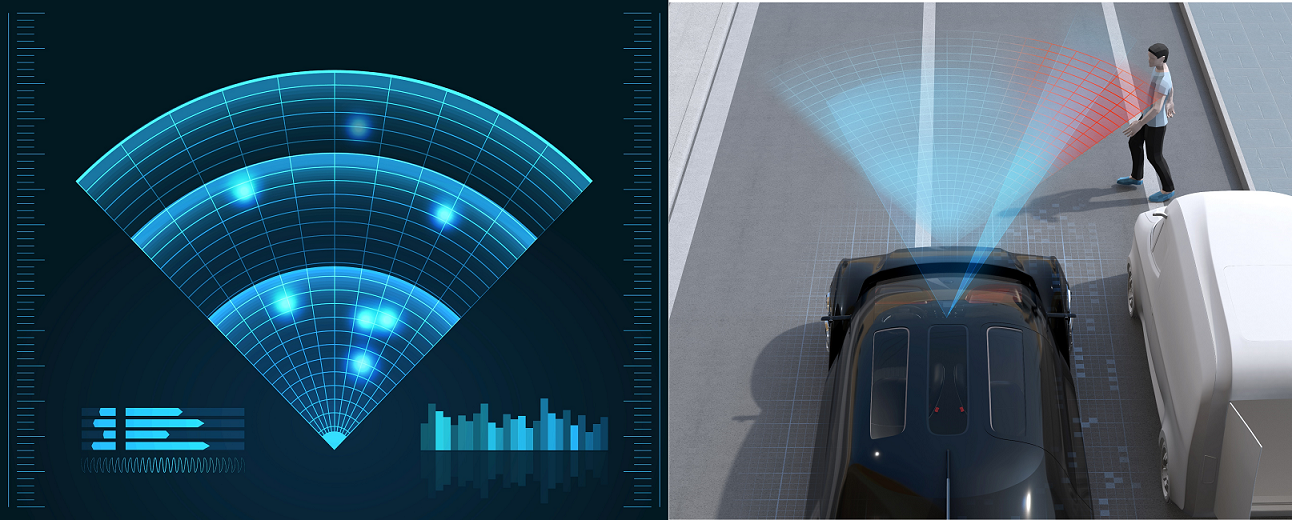

In recent years, imaging radar has rapidly gained attention in the fields of autonomous driving, robotics, and industrial safety. While conventional radar mainly detects whether an object is present, imaging radar is a next-generation technology that can visualize the shape and distribution of objects as a point cloud, in far finer detail than conventional radar. It approaches the recognition capabilities of cameras and LiDAR while retaining radar's distinctive weather resistance and speed estimation. In this article, we explain why imaging radar is attracting attention now, how point clouds are generated, and what technical challenges remain.

Sensor Technology That Visualizes the World: Where Imaging Radar Fits

Sensor technology that can "visualize" environmental information with high precision is essential in fields such as autonomous driving, robotics, and smart infrastructure. Currently, three types of sensors - cameras, LiDAR, and radar - are responsible for environmental recognition, each using different physical principles and wavelength bands. Understanding the characteristics and limitations of each sensor makes the technological advantages of imaging radar clear.

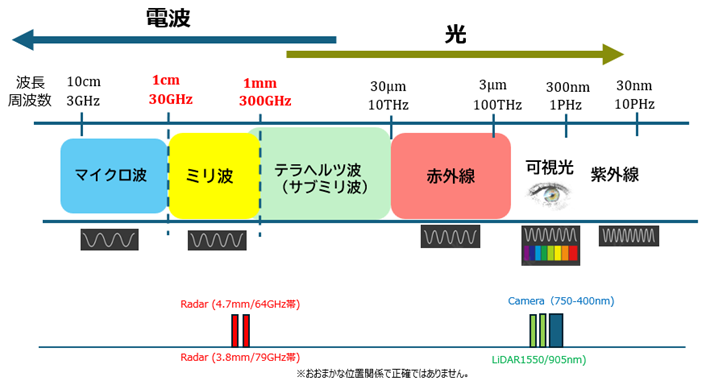

Characteristics of the conventional three pillars and differences in wavelength

Camera

- Characteristics: Excellent at capturing color and texture; strong in object recognition and classification.
- Limitations: Performance drops significantly in fog, darkness, and backlit conditions.
- Wavelength background: Visible light has a very short wavelength and is easily scattered by fine airborne particles (fog, smoke, raindrops), so visibility is readily obstructed.

LiDAR

- Characteristics: Generates highly accurate 3D point clouds, capturing the shape of objects in detail.
- Limitations: Performance degrades in bad weather such as rain, snow, and fog, and costs are high.
- Wavelength background: Infrared light has a longer wavelength than visible light and penetrates somewhat better, but it is still susceptible to absorption and scattering by moisture and particles.

Radar (conventional)

- Characteristics: Strong in distance and speed detection, and resistant to bad weather.
- Limitations: Angular resolution is low, and the output consists of sparse "point"-level detections, which makes image-like recognition difficult.
- Wavelength background: Millimeter waves and microwaves easily penetrate fog, rain, and snow, making them excellent for all-weather sensing. However, their long wavelengths tend to limit spatial resolution.

This is where Imaging Radar comes in.

Imaging Radar is a next-generation technology that leverages the strengths of conventional radar while increasing spatial resolution to enable imaging.

- Radar weather resistance + speed estimation: It provides stable performance even in rain or fog and can also estimate the speed of target objects.
- MIMO (Multiple Input Multiple Output) antenna arrays and wideband technology: A MIMO configuration with multiple antennas, combined with wideband signals, significantly improves azimuth and elevation resolution.
- Point cloud output: It generates a 3D point cloud that captures the shape of objects to some extent and can be used for AI-based recognition and classification.

Complementary relationship of sensors in terms of wavelength

| Sensor | Wavelength band | Strengths | Weaknesses |
|---|---|---|---|
| Camera | Visible light (400–750 nm) | Color, texture, classification | Vulnerable to bad weather and darkness |
| LiDAR | Infrared (905 nm / 1550 nm) | High-precision 3D shape | Rain, fog, and cost |
| Radar | Millimeter wave / microwave (3.9–12.5 mm) | Distance, speed, weather resistance | Low resolution; difficult to image |
| Imaging Radar | Millimeter wave | Weather resistance + imaging | Resolution may be inferior to LiDAR |

As such, differences in wavelength determine the strengths and weaknesses of sensors, and imaging radar is attracting attention as a way to fill that gap. In order to improve the accuracy and reliability of environmental recognition, it is also important to combine these sensors in a complementary manner.

Why is it attracting attention now?

Radar technology itself has long been used in a variety of fields, but in recent years "imaging radar" has attracted particular attention in the civilian sector. This is driven by advances in semiconductors, antenna technology, and AI-based signal processing, as well as by growing social needs such as autonomous driving and monitoring. Here, we briefly review the main reasons.

1. Evolution of semiconductor technology

The millimeter-wave front end, A/D converters, and DSP/AI accelerators can now be highly integrated in CMOS, making it possible to build high-density MIMO architectures with many transmit and receive elements at low cost and in a compact form factor.

2. Bandwidth and antenna array expansion

Wider signal bandwidths in the 60 GHz to 70 GHz bands have improved range resolution, and larger antenna arrays have improved azimuth and elevation resolution, making 4D imaging (distance, azimuth, elevation, and velocity) practical.
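The link between bandwidth and range resolution can be made concrete with the standard relation ΔR = c / (2B), where B is the swept signal bandwidth. The minimal Python sketch below evaluates it for a few bandwidths chosen purely for illustration, not taken from any specific product; the function name `range_resolution` is hypothetical. A 4 GHz sweep, for example, gives roughly 3.75 cm, in line with the "few centimeters" figures quoted later in this article.

```python
# Theoretical range resolution of a wideband radar: delta_R = c / (2 * B).
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def range_resolution(bandwidth_hz: float) -> float:
    """Return the theoretical range resolution in metres for sweep bandwidth B (Hz)."""
    if bandwidth_hz <= 0:
        raise ValueError("bandwidth must be positive")
    return SPEED_OF_LIGHT / (2.0 * bandwidth_hz)


if __name__ == "__main__":
    # Illustrative bandwidths only; real sweep widths depend on the device and regulations.
    for bw_ghz in (0.5, 1.0, 2.0, 4.0):
        res_cm = range_resolution(bw_ghz * 1e9) * 100
        print(f"B = {bw_ghz:.1f} GHz -> delta_R = {res_cm:.2f} cm")
```

Doubling the bandwidth halves ΔR, which is why wider bands translate directly into finer range bins.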

3. Evolution of software and AI

Beyond classical processing such as FFT-based beamforming, CFAR (Constant False Alarm Rate) detection, and clustering (DBSCAN, etc.), a growing body of research and products applies neural networks directly to the radar cube (range × Doppler × angle), enabling more accurate object recognition than conventional radar.
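As an illustration of one of the classical steps mentioned above, the sketch below implements cell-averaging CFAR (CA-CFAR), one common CFAR variant, on a 1-D power profile. It assumes square-law detected power samples with exponentially distributed noise, which is the case the threshold factor α = N(P_fa^(-1/N) - 1) is derived for. The function name, window sizes, and false-alarm probability are hypothetical defaults; real systems usually run CFAR in 2-D over a range-Doppler map.

```python
def ca_cfar(power, num_train=8, num_guard=2, pfa=1e-3):
    """Cell-averaging CFAR on a 1-D power profile (e.g. one range profile).

    For each cell under test, the noise level is the mean of num_train
    training cells on each side, skipping num_guard guard cells next to
    the test cell. alpha scales that estimate so the false-alarm
    probability is pfa for exponentially distributed noise power.
    """
    n_cells = 2 * num_train
    alpha = n_cells * (pfa ** (-1.0 / n_cells) - 1.0)
    half = num_train + num_guard
    detections = []
    # Edge cells without a full training window are skipped in this sketch.
    for i in range(half, len(power) - half):
        leading = power[i - half : i - num_guard]
        lagging = power[i + num_guard + 1 : i + half + 1]
        noise = (sum(leading) + sum(lagging)) / n_cells
        if power[i] > alpha * noise:
            detections.append(i)
    return detections


if __name__ == "__main__":
    profile = [1.0] * 40
    profile[20] = 50.0  # one strong reflector in flat noise
    print(ca_cfar(profile))  # [20]
```

Because the threshold follows the local noise estimate, the false-alarm rate stays roughly constant even when the noise floor changes, which is the point of CFAR.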

4. Use case maturity

• All-weather redundancy required for autonomous driving (rain, fog, and darkness)

• Detection of items left behind in the vehicle cabin

• Monitoring in nursing care facilities

• Non-contact safety monitoring of factories and infrastructure

• Drone navigation in poor visibility

In these applications, the advantages of imaging radar (radar that can generate images) become clear.

How are point clouds created?

Next, we'll look at point cloud generation, which is the core of Imaging Radar technology. In order for Imaging Radar to depict space in a "visible form," it must convert radio wave reflection information into physical coordinate data (point cloud). This is not just distance measurement; it is an advanced process that plots points in space. The process is explained below.

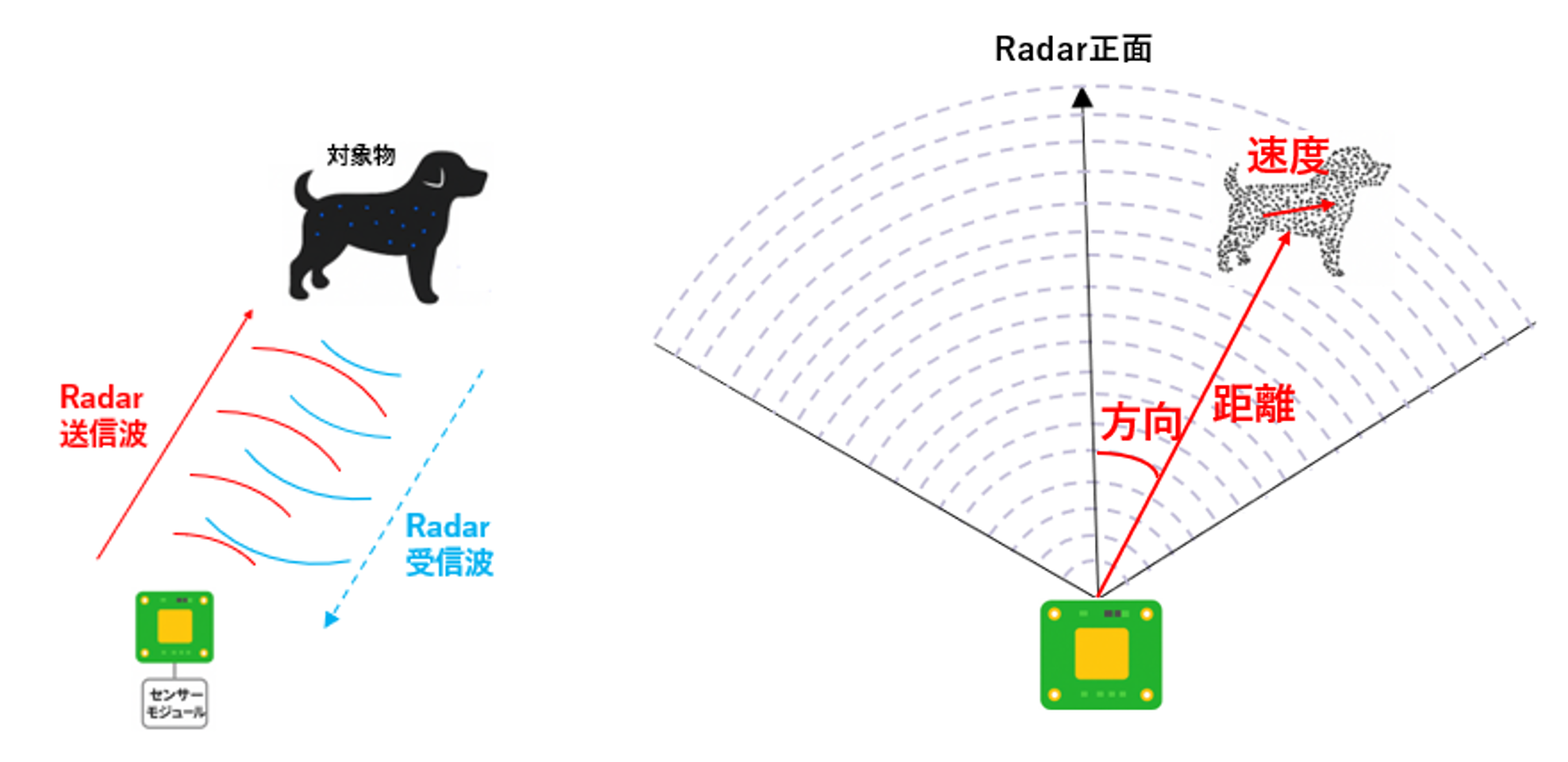

1. Transmitting radio waves and acquiring reflected signals

First, the imaging radar transmits millimeter-wave signals into space. When the waves hit an object, they are reflected back to the radar's receiving antennas. The following information can be obtained at this stage:

• Range: calculated from the time delay between transmission and reception

• Velocity: calculated by measuring the Doppler shift of the received signal

• Direction (angle): calculated from the phase differences between signals received at multiple antennas

• Reflection intensity: depends on the material and shape of the target
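The first three quantities follow from textbook relations: R = cτ/2 for a round-trip delay τ, v = f_d·λ/2 for a two-way Doppler shift f_d, and θ = arcsin(λΔφ / (2πd)) for a phase difference Δφ between two antennas spaced d apart. The minimal Python sketch below applies them, assuming an ideal single target and a 77 GHz carrier chosen only for illustration; the helper names are hypothetical. Reflection intensity depends on the target itself and is not modeled here.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def range_from_delay(round_trip_s):
    # The wave travels to the target and back, so the path is divided by two.
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def radial_velocity_from_doppler(doppler_hz, wavelength_m):
    # Two-way Doppler shift: f_d = 2 v / lambda  ->  v = f_d * lambda / 2.
    # Assumed sign convention: positive Doppler = approaching target.
    return doppler_hz * wavelength_m / 2.0


def angle_from_phase(delta_phase_rad, spacing_m, wavelength_m):
    # Plane wave reaching two antennas spaced d apart:
    # delta_phi = 2*pi*d*sin(theta)/lambda  ->  theta = asin(lambda*delta_phi / (2*pi*d)).
    # Unambiguous only when d <= lambda / 2.
    s = wavelength_m * delta_phase_rad / (2.0 * math.pi * spacing_m)
    return math.degrees(math.asin(s))


if __name__ == "__main__":
    lam = SPEED_OF_LIGHT / 77e9  # assumed 77 GHz carrier, about 3.9 mm
    print(range_from_delay(2 * 10.0 / SPEED_OF_LIGHT))       # about 10 m
    print(radial_velocity_from_doppler(2 * 5.0 / lam, lam))   # about 5 m/s
    print(angle_from_phase(math.pi * 0.5, lam / 2, lam))      # about 30 degrees
```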

2. Spatial Decomposition with MIMO and Beamforming

The MIMO (Multiple Input Multiple Output) configuration plays an important role in imaging radar. By combining multiple transmit and receive antennas, the radar obtains many virtual viewpoints (a virtual array), which improves spatial resolution.

Furthermore, by using beamforming technology, it is possible to concentrate radio waves in a specific direction and estimate the direction of arrival of reflected waves with high accuracy, which allows for a more precise understanding of the "location" of an object.
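To make the idea of virtual viewpoints concrete, the sketch below builds a MIMO virtual array (each transmit/receive pair behaves like one element located at the sum of the TX and RX positions) and then estimates the direction of a single target with a delay-and-sum (Bartlett) beamformer, one simple form of beamforming. The 3-TX/4-RX layout, positions expressed in wavelengths, the noise-free 20° target, and all function names are hypothetical; with this layout, 3 × 4 = 12 virtual elements form a uniform array at λ/2 spacing.

```python
import cmath
import math


def virtual_array(tx_positions, rx_positions):
    """Virtual element positions of a MIMO array (in wavelengths):
    every TX/RX pair acts like one element located at tx + rx."""
    return sorted(t + r for t in tx_positions for r in rx_positions)


def plane_wave(positions, angle_deg):
    """Ideal, noise-free snapshot of a far-field target at angle_deg."""
    s = math.sin(math.radians(angle_deg))
    return [cmath.exp(2j * math.pi * p * s) for p in positions]


def bartlett_spectrum(snapshot, positions, scan_deg):
    """Delay-and-sum beamformer: phase-align the array to each scan angle and sum."""
    spectrum = []
    for a in scan_deg:
        s = math.sin(math.radians(a))
        beam = sum(x * cmath.exp(-2j * math.pi * p * s)
                   for x, p in zip(snapshot, positions))
        spectrum.append(abs(beam) ** 2)
    return spectrum


if __name__ == "__main__":
    rx = [0.0, 0.5, 1.0, 1.5]  # 4 RX at lambda/2 spacing (hypothetical layout)
    tx = [0.0, 2.0, 4.0]       # 3 TX spaced 4 * lambda/2
    va = virtual_array(tx, rx)
    print(len(va), va)         # 12 virtual elements, uniformly spaced by lambda/2
    scan = list(range(-60, 61))
    spec = bartlett_spectrum(plane_wave(va, 20.0), va, scan)
    print(scan[spec.index(max(spec))])  # 20
```

Spacing the transmitters by the full receive-array length is what makes the 12 virtual elements contiguous rather than overlapping.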

3. Generate point clouds from distance, direction, speed, and intensity

Based on the acquired distance and direction information, polar coordinates (distance and angle) are converted to Cartesian coordinates (X, Y, Z). This allows the data to be expressed as a point in space.

X = R × cos(θ) × cos(φ)

Y = R × sin(θ) × cos(φ)

Z = R × sin(φ)

where:

R: Distance

θ: horizontal angle

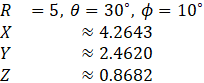

φ: Vertical angleFor example, if a reflection point is located at a distance of 5m, a horizontal angle of 30 degrees, and a vertical angle of 10 degrees, the following coordinate transformation is performed:

The many points obtained this way are collected into a **point cloud**. Each point carries attributes such as reflection intensity and velocity, and plotting these points in space provides clues for estimating the material and contours of objects.
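The conversion formulas above, together with the per-point attributes, can be sketched in Python as follows. The `RadarPoint` record and `to_point` helper are hypothetical names used for illustration; angles are taken in degrees, and the usage lines reproduce the 5 m / 30° / 10° example.

```python
import math
from typing import NamedTuple


class RadarPoint(NamedTuple):
    x: float          # m
    y: float          # m
    z: float          # m
    velocity: float   # m/s, radial (Doppler) velocity
    intensity: float  # reflection intensity, arbitrary units


def to_point(range_m, azimuth_deg, elevation_deg, velocity=0.0, intensity=0.0):
    """Convert one detection from (R, theta, phi) to Cartesian coordinates:
    X = R cos(theta) cos(phi), Y = R sin(theta) cos(phi), Z = R sin(phi)."""
    th = math.radians(azimuth_deg)
    ph = math.radians(elevation_deg)
    return RadarPoint(
        x=range_m * math.cos(th) * math.cos(ph),
        y=range_m * math.sin(th) * math.cos(ph),
        z=range_m * math.sin(ph),
        velocity=velocity,
        intensity=intensity,
    )


if __name__ == "__main__":
    p = to_point(5.0, 30.0, 10.0)
    print(f"X={p.x:.2f} m, Y={p.y:.2f} m, Z={p.z:.2f} m")  # X=4.26 m, Y=2.46 m, Z=0.87 m
```

Keeping velocity and intensity on each point lets later stages (filtering, clustering, AI classification) use them without going back to the raw radar data.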

4. Point Cloud Visualization and Post-Processing

Point clouds can be noisy and difficult to recognize as meaningful shapes, so the following post-processing is performed:

• Noise removal: Removes points with low reflection intensity and isolated points

• Clustering: Grouping nearby points and recognizing them as objects

• Tracking: Follows point clusters across successive frames to detect moving objects.
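The first two post-processing steps can be sketched with an intensity threshold followed by a minimal DBSCAN, the clustering method named earlier in this article; DBSCAN also labels isolated points as noise, which covers the second half of the noise-removal step. This is an illustrative O(n²) version with assumed parameters (`min_intensity`, `eps`, `min_pts`) and hypothetical function names, not a production implementation; libraries such as scikit-learn provide optimized DBSCAN implementations. Tracking is not shown.

```python
import math


def remove_weak_points(points, min_intensity):
    """Noise removal, part 1: drop points whose reflection intensity is too low.
    Each point is a tuple (x, y, z, intensity)."""
    return [p for p in points if p[3] >= min_intensity]


def dbscan(points, eps, min_pts):
    """Minimal DBSCAN on the xyz part of each point.

    Returns one label per point: 0, 1, 2, ... for clusters and -1 for
    isolated points (noise removal, part 2).
    """
    labels = [None] * len(points)  # None = not visited yet

    def neighbours(i):
        return [j for j in range(len(points))
                if math.dist(points[i][:3], points[j][:3]) <= eps]

    cluster = 0
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbours(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # noise for now; may later become a border point
            continue
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # border point: joins but does not expand
            if labels[j] is not None:
                continue
            labels[j] = cluster
            more = neighbours(j)
            if len(more) >= min_pts:  # core point: keep expanding the cluster
                queue.extend(more)
        cluster += 1
    return labels


if __name__ == "__main__":
    raw = [
        (0.0, 0.0, 0.0, 1.0), (0.1, 0.0, 0.0, 1.0), (0.0, 0.1, 0.0, 1.0),
        (0.05, 0.05, 0.0, 0.01),  # weak return
        (5.0, 5.0, 0.0, 1.0), (5.1, 5.0, 0.0, 1.0), (5.0, 5.1, 0.0, 1.0),
        (10.0, 0.0, 0.0, 1.0),    # isolated point
    ]
    pts = remove_weak_points(raw, min_intensity=0.1)
    print(dbscan(pts, eps=0.3, min_pts=2))  # [0, 0, 0, 1, 1, 1, -1]
```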

After these steps, the result is displayed in 3D in a form that humans can understand, or passed to AI for object recognition.

What is the actual point cloud accuracy of Imaging Radar?

So far, we've briefly looked at how Imaging Radar generates a point cloud from radar signals and visualizes space, but how accurate is the point cloud that is actually obtained?

Because this is a common concern, this section compares Imaging Radar with LiDAR, the sensor it is most often compared against, focusing on point cloud accuracy and giving specific figures.

Actual point cloud accuracy of Imaging Radar

Distance resolution:

With high-performance imaging radar, distance resolution ranges from a few centimeters to just under 10 cm (LiDAR achieves about 2 cm, so radar is slightly inferior).

Angular resolution:

Horizontal: 1° to 2° (approximately 0.5° with high-density arrays)

Vertical: 2° to 5° (vertical resolution is coarser)

Point cloud density:

LiDAR captures hundreds of thousands of points per second, whereas radar captures only a few thousand to tens of thousands of points per second.

→ Radar can be characterized as "coarse, but usable in all weather conditions."

Features:

- Resistant to rain, fog, and snow

- Reflection strength varies greatly with material (returns from non-metallic objects are weak)

- The point cloud is "sparse" and object contours are rough, but object positions can still be recognized.

Comparison with LiDAR (reference)

| Item | Imaging Radar (70 GHz band) | LiDAR (Related Article #1) |
|---|---|---|
| Distance accuracy | A few cm to 10 cm | Approximately 2 cm |
| Angular resolution | 1° to 5° | 0.1° |
| Point cloud density | Thousands to tens of thousands of points/s | Hundreds of thousands of points/s |
| Weather resistance | ◎ (Stable even in rain, fog, and darkness) | △ (Performance decreases in rain and fog) |

Current situation and issues

In this column, we have explained the features of Imaging Radar, comparing it with Camera, LiDAR, and conventional Radar. Imaging Radar is by no means an all-purpose technology, and at present, many challenges and hurdles remain. Furthermore, in recent years, the trend toward "sensor fusion," which combines and utilizes the strengths of each sensor, has been accelerating.

Finally, I would like to summarize the current state of Imaging Radar and the issues that need to be resolved in the future.

[Current situation: technological progress]

1. Achieving high resolution

The MIMO configuration and wideband signals achieve higher spatial resolution than conventional radar.

The shape of an object can be captured to some extent as a 3D point cloud.

2. All-weather performance

Stable detection capabilities even in bad weather conditions such as rain, fog, and snow.

Because its wavelength is longer than that of visible or infrared light, it offers excellent environmental resistance.

3. Speed estimation ability

By utilizing the Doppler effect, the moving speed of an object can be estimated with high accuracy.

4. Collaboration with AI

By analyzing and classifying point cloud data using AI, the accuracy of object recognition is improved.

Sensor fusion is also advancing multimodal recognition.

[Challenges: Technical and operational barriers]

1. Resolution Limits

Range resolution can be improved by widening the bandwidth, but angular resolution depends on the antenna aperture.

In applications such as automotive, installation space is tightly constrained, which limits antenna size.
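A common rule of thumb shows why aperture is the limiting factor: for a uniform linear (virtual) array of N elements with spacing d, the angular resolution near boresight is roughly λ / (N·d·cos θ) radians, or 2/N radians for d = λ/2. The sketch below evaluates this approximation; the function names, element counts, and 77 GHz carrier are assumptions for illustration. By this estimate, 12 virtual elements give only about 9.5°, and reaching about 1° needs on the order of 115 elements at λ/2 spacing, a virtual aperture of roughly 22 cm at 77 GHz.

```python
import math


def angular_resolution_deg(num_elements, spacing_wl=0.5, steer_deg=0.0):
    """Approximate angular resolution (degrees) of a uniform linear array:
    delta_theta ~ lambda / (N * d * cos(theta)), with d given in wavelengths."""
    aperture_wl = num_elements * spacing_wl
    return math.degrees(1.0 / (aperture_wl * math.cos(math.radians(steer_deg))))


def elements_for_resolution(target_deg, spacing_wl=0.5):
    """Smallest element count reaching target_deg at boresight under the same rule."""
    return math.ceil(1.0 / (spacing_wl * math.radians(target_deg)))


if __name__ == "__main__":
    lam_mm = 299_792_458.0 / 77e9 * 1e3  # assumed 77 GHz carrier, about 3.9 mm
    print(f"{angular_resolution_deg(12):.1f} deg")  # 9.5 deg for 12 virtual elements
    n = elements_for_resolution(1.0)
    print(n, f"elements, virtual aperture about {n * 0.5 * lam_mm / 10:.1f} cm")
```

Resolution also degrades away from boresight (the cos θ term), so wide-angle coverage makes the aperture requirement even stricter.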

2. Multipath and Ghosting

Reflections from walls, vehicles, and other surfaces can cause false detections (ghosts).

Research is currently underway into AI-based clutter removal and multipath correction.

3. Interference Issues

When multiple radars operate simultaneously in the same environment, they may interfere with one another.

Countermeasures such as chirp randomization and frequency separation are being developed.

4. High computational load

4D imaging (distance, azimuth, elevation, and velocity) requires a huge amount of computation, including FFTs and DOA (direction-of-arrival) estimation.

Edge AI and dedicated DSPs are essential.
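To give a sense of the scale, the sketch below counts radix-2 FFT butterflies (each one complex multiply and two complex additions) for range, Doppler, and one angle FFT over a radar cube. The cube dimensions and frame rate in the usage lines are hypothetical, complex ADC samples are assumed, and the function names are illustrative; elevation processing, CFAR, and any super-resolution DOA method would add considerably more work. With 256 samples, 128 chirps, and 12 channels zero-padded to 64 angle bins, this comes to about 9.2 million butterflies per frame.

```python
import math


def fft_butterflies(n):
    """A radix-2 FFT of length n needs (n / 2) * log2(n) butterflies."""
    if n < 2 or n & (n - 1):
        raise ValueError("length must be a power of two")
    return (n // 2) * int(math.log2(n))


def cube_fft_workload(samples, chirps, angle_bins, channels):
    """Butterflies per frame for range, Doppler, and one angle FFT over a radar cube.

    Range FFT: one per chirp and channel. Doppler FFT: one per range bin and
    channel. Angle FFT: one per range-Doppler cell, with the virtual channels
    zero-padded to angle_bins.
    """
    if channels > angle_bins:
        raise ValueError("angle_bins must be >= channels")
    range_ops = chirps * channels * fft_butterflies(samples)
    doppler_ops = samples * channels * fft_butterflies(chirps)
    angle_ops = samples * chirps * fft_butterflies(angle_bins)
    return range_ops + doppler_ops + angle_ops


if __name__ == "__main__":
    per_frame = cube_fft_workload(samples=256, chirps=128, angle_bins=64, channels=12)
    frames_per_s = 20  # hypothetical frame rate
    print(f"{per_frame:,} butterflies/frame")              # 9,240,576 butterflies/frame
    print(f"{per_frame * frames_per_s:,} butterflies/s")
```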

5. Cost and size challenges

Implementing high-performance antennas and wideband circuits increases cost and housing size.

In-vehicle and drone applications impose strict limits on installation space and power consumption.

6. Variation in recognition accuracy

Reflection characteristics vary with the material and shape of the object and with the surrounding environment, so recognition accuracy also varies.

AI-based correction and larger, more diverse training datasets are needed.

7. Lack of standardization and infrastructure development

Standard communication protocols and data formats for integrated operation with other sensors have not yet been established.

Development and evaluation environments are also still being put in place.

Summary

Imaging radar combines MIMO, wideband signals, and powerful signal processing with radar's inherent strengths of weather resistance and speed estimation. In doing so, it complements the weaknesses of cameras and LiDAR and enhances the redundancy and reliability of perception. Challenges remain in design, calibration, interference, and computational complexity, but 4D imaging and AI-based restoration and identification are steadily improving both the density of point clouds and the meaning that can be extracted from them. In the sensor fusion era, imaging radar is becoming an important pillar that supports not only "seeing" but also "sensing movement" and "staying stable in adverse conditions." Advances in semiconductors, AI, and algorithms are expected to further improve accuracy and reduce costs.