Introduction

Hello, this is a member of the Nexty Electronics Development Department.

I am in charge of technical support for GPU Advanced Test Drive (GAT), which allows you to try out various GPU servers at a low cost for a limited period of time with a high degree of freedom.

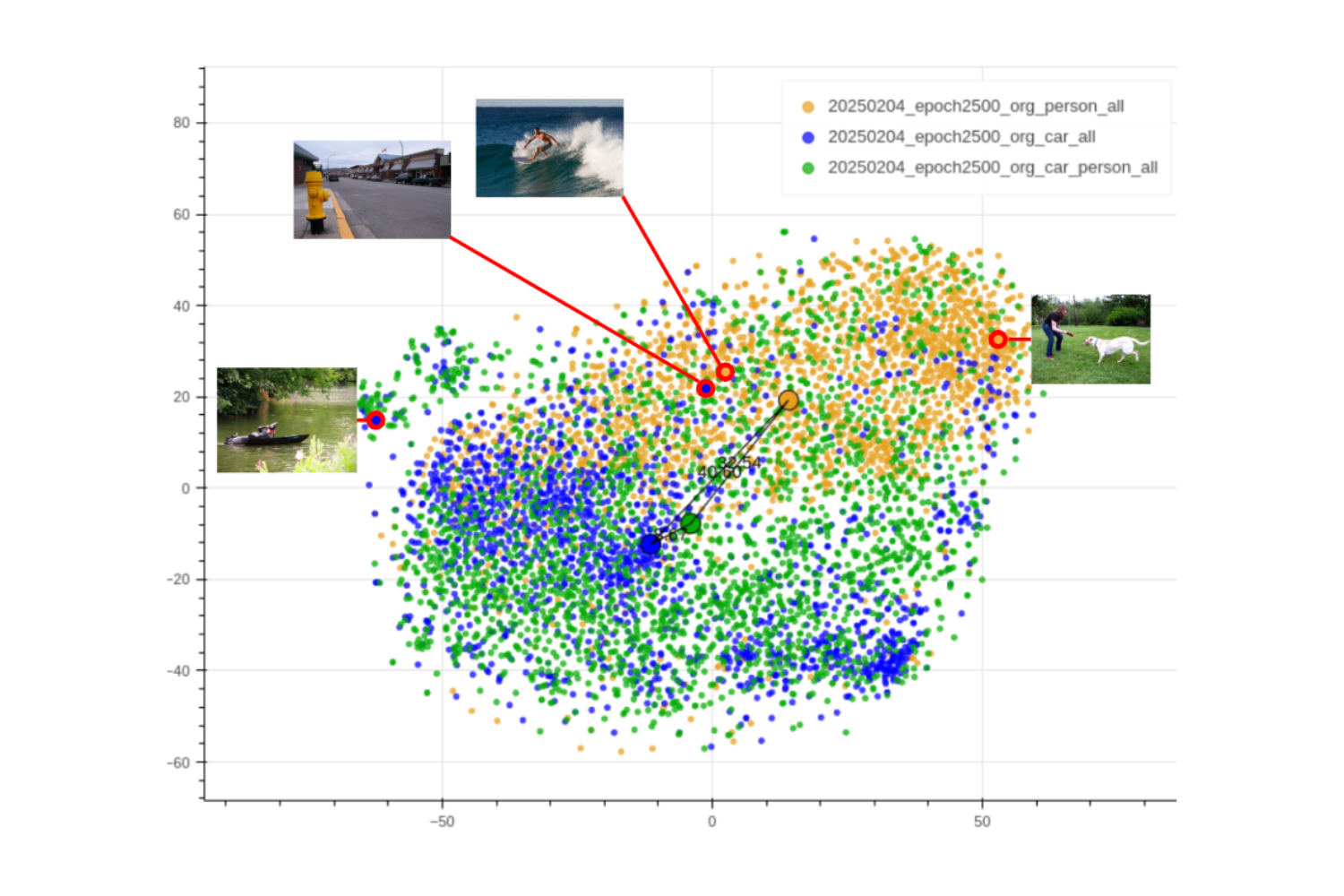

Recently, GPU-based video generation has become practical, and generated videos are increasingly used as test data for exceptional cases that are normally difficult to capture on camera, in fields such as robot control and autonomous driving.

One platform for this is NVIDIA Cosmos, released by NVIDIA.

One of its models, Cosmos-Predict1, has been released as part of the AI Enterprise framework. (GAT also offers this as a paid option, so please feel free to take advantage of it.) Using this model, you can generate videos like the one below from text.

Cosmos-Predict1 on AI Enterprise is distributed as a "NIM," a prepackaged Docker container format that lets you build an optimized self-hosted execution environment on a DGX machine simply by pulling the image. In this article, we cover the steps to set up this NIM and the generation speed on the DGX-H100 and DGX-A100.

Equipment used

The specifications of the DGX-H100 and DGX-A100 used in this article are as follows. Both are DGX servers equipped with 8 GPUs, and both are available through GAT.

| Category | DGX-H100 | DGX-A100 |
|---|---|---|
| GPU | NVIDIA H100 SXM 80GB x 8 | NVIDIA A100 SXM 40GB x 8 |
| CPU | Dual x86 | Dual AMD Rome 7742 |
| Memory | 2TB | 2TB |
| OS storage | 2 x 1.92TB M.2 NVMe | 2 x 1.92TB M.2 NVMe |
| Data storage | 30 TB (8 x 3.84 TB U.2 NVMe) | 30 TB (8 x 3.84 TB U.2 NVMe) |
| OS | DGX OS (Ubuntu-based) | DGX OS (Ubuntu-based) |
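To confirm what a given server actually exposes, per-GPU memory can be read with nvidia-smi. The following is a minimal sketch, not part of the original procedure: the function names `summarize_vram` and `vram_ok` are hypothetical, and the thresholds are supplied by the caller (for example, from the VRAM guideline mentioned in the setup steps below). nvidia-smi reports values in MiB, so they will not match the nominal GB figures in the table exactly.

```shell
#!/bin/bash
# Summarize per-GPU memory values (MiB, one per line) read from stdin.
# Prints: "<gpu count> <smallest MiB> <total MiB>"
summarize_vram() {
  awk '
    NF {
      v = $1 + 0
      if (n == 0 || v < min) min = v
      total += v
      n++
    }
    END { printf "%d %d %d\n", n, min, total }
  '
}

# Succeed only if every GPU has at least $1 MiB and the sum is at least $2 MiB.
# Both thresholds are chosen by the caller; nothing is hard-coded here.
vram_ok() {
  local min_each=$1 min_total=$2
  local summary count smallest total
  summary=$(summarize_vram)
  read -r count smallest total <<< "$summary"
  (( count > 0 && smallest >= min_each && total >= min_total ))
}

# Example, to be run on the GPU server:
#   nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits | summarize_vram
#   nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits | vram_ok 48000 100000
```

Reading the values from stdin keeps the helpers independent of nvidia-smi itself, so they can also be tried with sample numbers on a machine without a GPU.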

Setting up the environment (text2world)

This time, we will build text2world, which generates videos from text.

1. (If you are building it locally) Issue an NGC token for AI Enterprise in advance. (Developer Program permissions are also acceptable here.)

2. Connect to the H100 via SSH (GAT uses SSH connections). When doing so, forward the port of the NVIDIA Cosmos server so that your local computer can exchange data with it.

*The following command is only an example; the address and other details are placeholders.

    ssh -L 18000:localhost:18000 username@h100.gat.hogehoge

3. On the H100 connected via SSH, set the AI Enterprise token issued in step 1 as the environment variable NGC_API_KEY.

    h100> export NGC_API_KEY=.......

4. (If the container image does not exist yet) Pull the Docker image. The image is 14.9G.

    h100> docker login nvcr.io
    Username: $oauthtoken    (← enter exactly as shown)
    Password:                (← enter the NGC token here as well)
    Login Succeeded          ← displayed on success
    h100> docker pull nvcr.io/nim/nvidia/cosmos-predict1-7b-text2world:latest

5. On the H100, run the following Docker startup script:

    #!/bin/bash
    # If typing the key every time is tedious, set it in this script as well
    #export NGC_API_KEY=.....

    # Location of the NIM cache
    # If it does not exist, create the folder in advance and make it accessible with your user permissions
    export LOCAL_NIM_CACHE=/home/username/.cache
    mkdir -p "$LOCAL_NIM_CACHE"

    # Start the Docker container
    docker run -it --rm \
      --gpus all \
      --ipc host \
      -v "$LOCAL_NIM_CACHE:/opt/nim/.cache" \
      -u "$(id -u)" \
      -e NGC_API_KEY \
      -p 18000:8000 \
      nvcr.io/nim/nvidia/cosmos-predict1-7b-text2world:latest

The execution log is displayed, so wait for the container to finish starting. The first start takes some time because the models and other files are downloaded (12G/28G/9G, etc.). Startup is complete when the following message appears.

    INFO 2025-05-19 02:39:22.057] Serving endpoints:
    0.0.0.0:8000/v1/health/live (GET)
    0.0.0.0:8000/v1/health/ready (GET)
    0.0.0.0:8000/v1/metrics (GET)
    0.0.0.0:8000/v1/license (GET)
    0.0.0.0:8000/v1/metadata (GET)
    0.0.0.0:8000/v1/manifest (GET)
    0.0.0.0:8000/v1/infer (POST)
    INFO 2025-05-19 02:39:22.057] {'message': 'Starting HTTP Inference server', 'port': 8000, 'workers_count': 1, 'host': '0.0.0.0', 'log_level': 'info', 'SSL': 'disabled'}

If you get an error, the cause is often insufficient VRAM (running this NIM seems to require 100G or more in total / 48G or more on each GPU).

6. Open another terminal on your local machine and run the following script. It sends settings such as the prompt, receives the generated data, converts the video portion of the response into a video file, and saves the upsampled prompt as text.

    #!/bin/bash
    video_no=0

    # Send the request through the forwarded port and save the JSON response to "infer"
    curl -X 'POST' \
      'http://localhost:18000/v1/infer' \
      -H 'Accept: application/json' \
      -H 'Content-Type: application/json' \
      -d@- <<EOF > infer
    {
      "prompt": "The video is captured from a camera mounted on a car. The camera is facing forward. The footage shows a quiet American street corner on a sunny day. A small diner with a retro sign stands at one corner, while the other corners feature low-rise office buildings and wide sidewalks. A single pickup truck waits at the intersection, with its brake lights visible with no visible movement. Suddenly, the car collides with the back of the truck.",
      "seed": 0
    }
    EOF

    # Decode the Base64-encoded video and save the upsampled prompt
    jq -r ".b64_video" infer | base64 -d > video${video_no}.mp4
    jq -r ".upsampled_prompt" infer > upprompt${video_no}.txt

7. Try creating various videos by changing the prompt and the seed value (even with the same prompt, a different seed gives a different result) and repeating the request in step 6. You can change the names of the saved files by changing video_no.

8. When you are finished, press Ctrl-C in the terminal running the Docker startup script to stop the NVIDIA Cosmos server.
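Because the first start can take a while, it can help to wait until the server reports ready before sending the request in step 6. The sketch below polls the `/v1/health/ready` endpoint listed in the startup log through the forwarded port. The function name `wait_until_ready` and its default attempt count and delay are hypothetical, and the sketch assumes the endpoint answers with a non-success status (or the connection fails) until the model is loaded.

```shell
#!/bin/bash
# Poll the readiness endpoint until it answers successfully or we give up.
# Usage: wait_until_ready [base_url] [max_attempts] [delay_seconds]
wait_until_ready() {
  local base_url=${1:-http://localhost:18000}
  local max_attempts=${2:-60}
  local delay=${3:-10}
  local attempt
  for (( attempt = 1; attempt <= max_attempts; attempt++ )); do
    # -f: treat HTTP error statuses as failure, -s: no progress output,
    # -o /dev/null: discard the response body
    if curl -fs -o /dev/null "$base_url/v1/health/ready"; then
      echo "ready after $attempt attempt(s)"
      return 0
    fi
    # Do not sleep after the final failed attempt
    if (( attempt < max_attempts )); then
      sleep "$delay"
    fi
  done
  echo "not ready after $max_attempts attempts" >&2
  return 1
}

# Example (on the local machine, with the SSH port forward from step 2 active):
#   wait_until_ready http://localhost:18000 60 10 && ./request.sh
```

Here `request.sh` stands for the script from step 6 saved under a file name of your choice.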

Examples of generated videos

We mainly generate in-vehicle camera videos.

As mentioned at the beginning of this article, the video below, generated with Cosmos-Predict1, shows a scene that is difficult to capture in real life. (Please feel free to contact us for more information on prompting.)

Time taken to generate

We verified operation on GAT's DGX-A100 and DGX-H100 servers.

Approximate times to generate one video are as follows:

| Server | Time for first generation after startup (mm:ss) |
|---|---|
| DGX-H100 | 01:18 |
| DGX-A100 | 02:36 |

The first generation after startup seems to take the longest, so the times above are the longest we measured (repeated generations are slightly faster).
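To reproduce measurements like these, the request script can be wrapped in a simple timer. The following is a minimal sketch with hypothetical helper names (`mmss_to_seconds`, `seconds_to_mmss`, `time_mmss`); it relies only on bash's built-in `SECONDS` counter, so the resolution is one second.

```shell
#!/bin/bash
# Convert "MM:SS" to seconds. The 10# prefix forces base 10 so that
# values such as "08" are not rejected as invalid octal numbers.
mmss_to_seconds() {
  local minutes=${1%%:*} seconds=${1##*:}
  echo $(( 10#$minutes * 60 + 10#$seconds ))
}

# Format a number of seconds as "MM:SS".
seconds_to_mmss() {
  printf '%02d:%02d\n' $(( $1 / 60 )) $(( $1 % 60 ))
}

# Run a command, print its wall-clock time as MM:SS on stderr,
# and return the command's own exit status.
time_mmss() {
  local start=$SECONDS status=0
  "$@" || status=$?
  seconds_to_mmss $(( SECONDS - start )) >&2
  return "$status"
}

# The two times from the table above, compared in seconds
h100=$(mmss_to_seconds 01:18)
a100=$(mmss_to_seconds 02:36)
echo "DGX-A100 time is $(( a100 * 100 / h100 ))% of the DGX-H100 time"

# Example: time_mmss ./request.sh
```

With the table values (78 and 156 seconds), the DGX-H100 completed the first generation in half the time of the DGX-A100 in this measurement.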

With a DGX machine that has 8 GPUs running in parallel, it doesn't take long to generate one video.

Video generation tends to be iterative, with repeated seed changes and prompt tweaks, but on these machines each iteration completes with practical latency.
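This kind of iteration can be scripted. The sketch below generalizes the request script from the setup section into a loop over seed values; it is an illustration, not the procedure used for the measurements above. The function names `build_payload`, `generate_one`, and `sweep_seeds` are hypothetical, while the endpoint, the `prompt` and `seed` request fields, and the `b64_video` and `upsampled_prompt` response fields are the ones used in that script. Building the JSON with jq avoids breaking the request when a prompt contains quotation marks.

```shell
#!/bin/bash
# Build the JSON request body; jq takes care of quoting and escaping the prompt.
build_payload() {
  local prompt=$1 seed=$2
  jq -n --arg prompt "$prompt" --argjson seed "$seed" \
    '{prompt: $prompt, seed: $seed}'
}

# Send one request and save video<seed>.mp4 and upprompt<seed>.txt.
generate_one() {
  local prompt=$1 seed=$2 url=${3:-http://localhost:18000/v1/infer}
  local response
  response=$(mktemp) || return 1
  if ! build_payload "$prompt" "$seed" |
       curl -fsS -X POST "$url" \
         -H 'Accept: application/json' \
         -H 'Content-Type: application/json' \
         -d @- -o "$response"; then
    echo "request failed for seed $seed" >&2
    rm -f "$response"
    return 1
  fi
  # jq -e exits non-zero when the field is missing or null,
  # so an error response is not decoded into a broken video file.
  if ! jq -e '.b64_video' "$response" > /dev/null; then
    echo "no video in response for seed $seed" >&2
    rm -f "$response"
    return 1
  fi
  jq -r '.b64_video' "$response" | base64 -d > "video${seed}.mp4"
  jq -r '.upsampled_prompt' "$response" > "upprompt${seed}.txt"
  rm -f "$response"
}

# Generate one video per seed with the same prompt; stop at the first failure.
sweep_seeds() {
  local prompt=$1 seed
  shift
  for seed in "$@"; do
    generate_one "$prompt" "$seed" || return 1
  done
}

# Example: sweep_seeds "The video is captured from a camera mounted on a car. ..." 0 1 2
```

Naming the output files after the seed makes it easy to regenerate a particular result later with the same prompt and seed.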

Further developments: Cosmos-Predict2 / Cosmos-Transfer1

We also accept inquiries about environment setup, know-how, and video generation for Cosmos-Predict2, the successor to the Cosmos-Predict1 introduced here, and for Cosmos-Transfer1, which can create videos from existing datasets such as LiDAR data.

Examples are shown below.

Cosmos-Predict2

Below is an example generated from text alone with Cosmos-Predict2 (an exceptional case where the car in front is spinning).

Below is an example where a single photo is used as the first frame and the rest is generated from text (a case where the car in front has broken down).

Cosmos-Transfer1

Below is an example of using Cosmos-Transfer1 with our in-house LiDAR data (converted to nuScenes format) as input, changing only the weather.

Because the LiDAR input is the same, the scene is the same, but you can see the conditions change from normal weather to snow, to rain at night, and to a backlit early morning.

Conclusion

We explained how to run Cosmos-Predict1 on a DGX machine and how long generation takes. We also showed some of our examples using Cosmos-Predict2 and Cosmos-Transfer1.

Although this time we focused on in-vehicle footage, we have confirmed that it is possible to create a variety of videos, such as control footage from a robot's perspective, or footage for monitoring parking lots and construction sites. Please feel free to contact us with any questions about whether a particular kind of video can be created.


*NVIDIA Cosmos is a trademark of NVIDIA Corporation