SLAM (Simultaneous Localization and Mapping) technology, which combines ROS2 (Robot Operating System 2) and LiDAR (Light Detection and Ranging), is attracting attention in a variety of fields, including self-driving robots, drones, AGVs (automated guided vehicles), and indoor navigation robots.

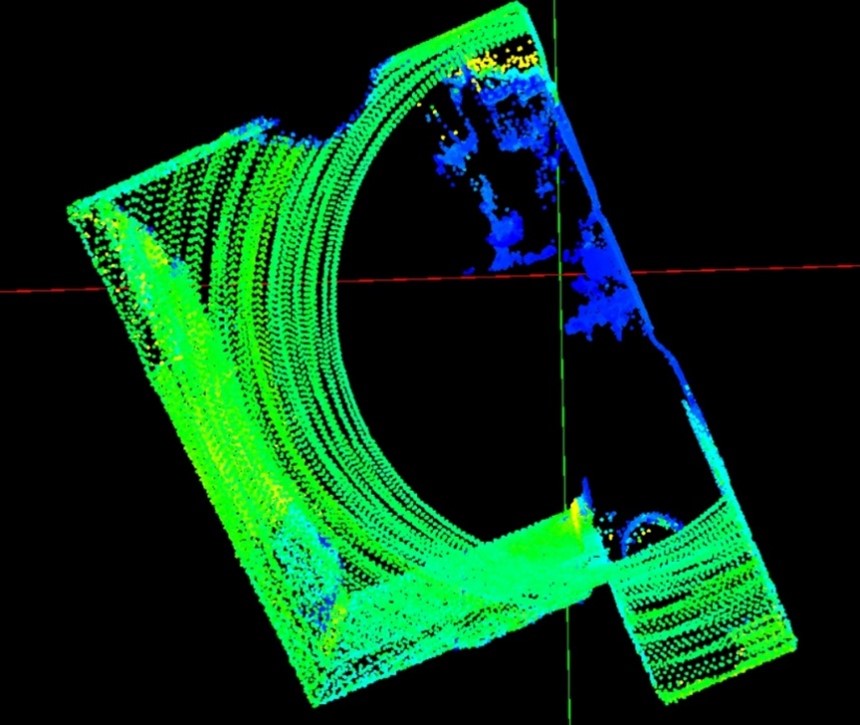

In recent years, 3D mapping technology using LiDAR has also been used at construction and civil engineering sites for topographical surveying, construction management, and monitoring of structural deformation, contributing to the promotion of construction DX (Digital Transformation).

On the other hand, there are technical challenges to implementing it in the field, such as the need for an external IMU and the uncertainty of the units of acceleration data, and ingenuity is required to ensure accuracy.

In this column, we will explain the key points for achieving highly accurate self-positioning and navigation without the need for an external IMU by utilizing the MID-360 3D-LiDAR with built-in IMU (Inertial Measurement Unit).

We will introduce an example of implementing acceleration data unit conversion (g → m/s²) using Python, which is expected to improve the accuracy of robot motion analysis, SLAM, and 3D mapping applications.

MID-360 Product Overview

The Livox Mid-360 is a next-generation 3D LiDAR sensor that achieves a wide 360° x 59° field of view and high-density point cloud output at up to 200,000 points per second. Equipped with an IMU (ICM-40609-D) in a compact housing (65 x 65 x 60 mm), it supports self-localization and point cloud correction in dynamic environments, significantly improving the accuracy and stability of SLAM and autonomous driving systems.

The built-in IMU is a 6-axis MEMS (Micro Electro Mechanical Systems) motion tracking device that integrates a 3-axis accelerometer and a 3-axis gyroscope, enabling accurate motion analysis and making it ideal for integration into indoor and outdoor mobile robots, AGVs (Autonomous Guided Vehicles), and 3D mapping.

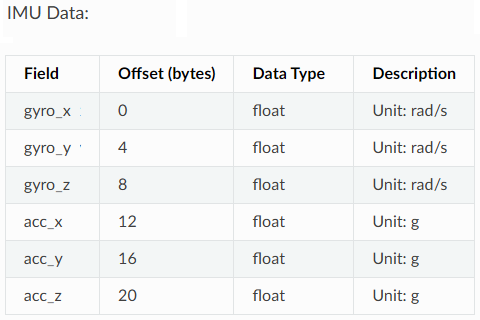

IMU Data Format

By using the ROS Driver2 provided by Livox: livox_ros_driver2, IMU data is published as a ROS2 topic (/livox/imu).

The IMU data format complies with the Livox_SDK2 protocol specifications, and acceleration data (acc_x, acc_y, acc_z) is output in units of "g" as shown in the table below.

IMU data format

On the other hand, ROS sensor_msgs/Imu messages assume the unit is "m/s²", so the data cannot be used as is.

Therefore, we created a dedicated node that converts the acceleration data output from livox_ros_driver2 into "m/s²".

By simply adding this node after the libox_ros_driver2, you can easily use the data from the MID-360's built-in IMU on ROS2.

IMU_converter

Below is an example implementation of a Python node for ROS2 that converts IMU values from "g" to "m/s²".

#!/usr/bin/env python3

import rclpy

from rclpy.node import Node

from sensor_msgs.msg import Imu

from geometry_msgs.msg import Vector3

from rclpy.qos import qos_profile_sensor_data

class ImuConverter(Node):

def __init__(self):

super().__init__('imu_converter')

self.subscriber = self.create_subscription(

Imu,

'/livox/imu', # 元のIMUデータのトピック

self.listener_callback,

qos_profile_sensor_data

)

self.publisher = self.create_publisher(

Imu,

'/imu_converted', # 変換後のIMUデータのトピック

qos_profile_sensor_data

)

def listener_callback(self, msg):

# 加速度をgからm/s²に変換

conversion_factor = 9.81

converted_msg = Imu()

# ヘッダー情報をコピー

converted_msg.header = msg.header

# ジャイロスコープデータはそのままコピー

converted_msg.angular_velocity = msg.angular_velocity

# 加速度データを変換

converted_msg.linear_acceleration = Vector3(

x=msg.linear_acceleration.x * conversion_factor,

y=msg.linear_acceleration.y * conversion_factor,

z=msg.linear_acceleration.z * conversion_factor

)

# 姿勢情報もそのままコピー(必要に応じて変更)

converted_msg.orientation = msg.orientation

# 変換したIMUデータをパブリッシュ

self.publisher.publish(converted_msg)

#self.get_logger().info('Published converted IMU data')

def main(args=None):

rclpy.init(args=args)

imu_converter = ImuConverter()

rclpy.spin(imu_converter)

imu_converter.destroy_node()

rclpy.shutdown()

if __name__ == '__main__':

main()

The converted data is published in the /imu_converted topic in units of "m/s²", so the acceleration data can be used directly in subsequent processing.

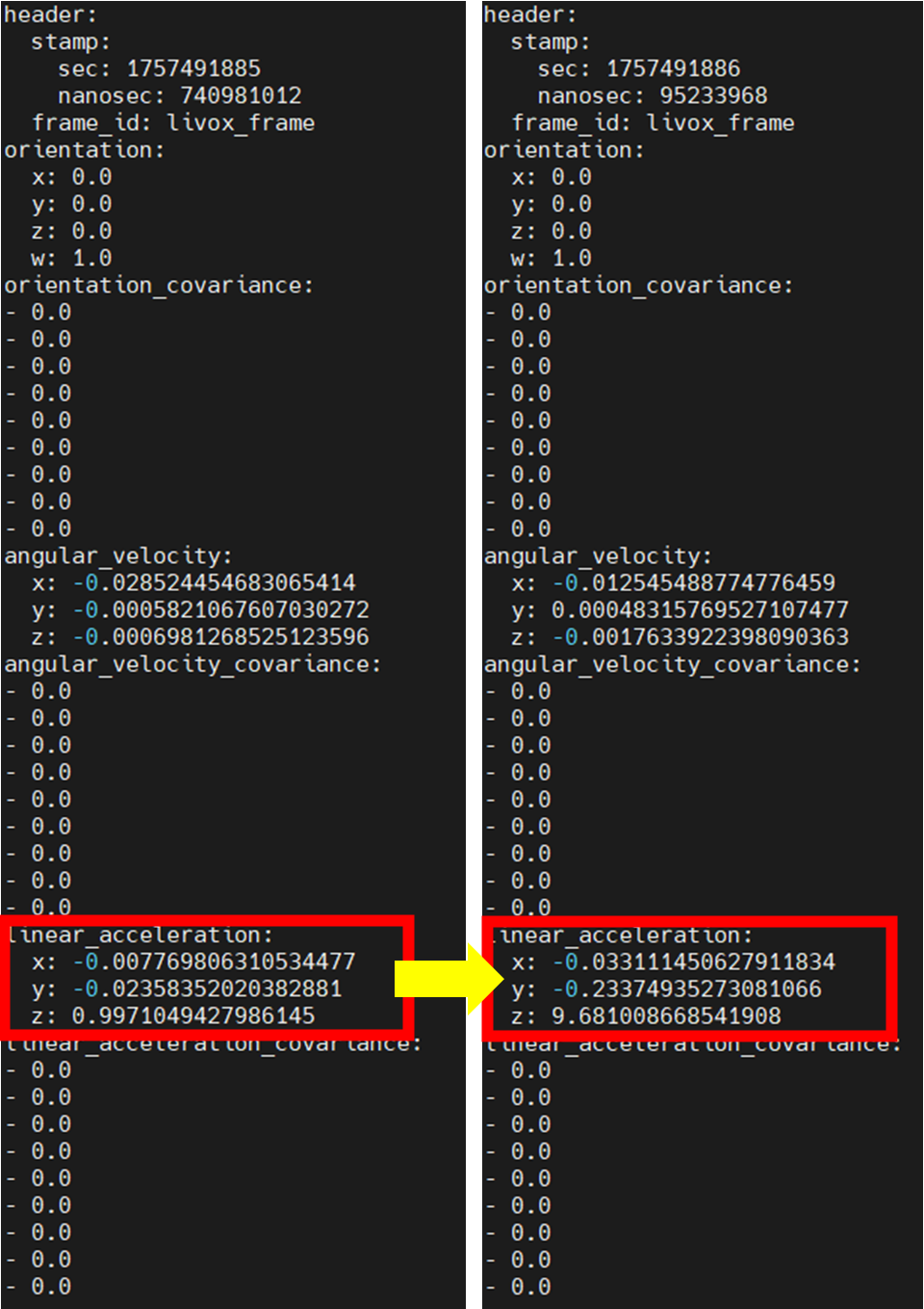

Below is the data before conversion: /livox/imu and after conversion: /imu_converted.

After the conversion, we can see that the linear_acceleration value is approximately 9.81 times larger.

/livox/imu data

/imu_converted data

This node has been confirmed to work in the ROS2 Humble *1 and Jazzy *2 environments.

We also confirmed that using it in conjunction with the LiDAR-based SLAM algorithm FAST-LIO significantly reduced the loss of self-positioning. This was due to improved SLAM stability achieved by pre-processing IMU data and improving the accuracy of coordinate transformation.

On the other hand, this can also be achieved by directly modifying the source code of libox_ros_driver2.

Please refer to this document for how to modify the source code of livox_ros_driver2.

*1 ROS2 version compatible with Ubuntu 22.04 LTS

*2 ROS2 version compatible with Ubuntu 24.04 LTS

Summary

By utilizing this node, MID-360 IMU data can be used accurately and efficiently in a ROS2 environment, enabling highly accurate self-localization and point cloud correction without the need for an external IMU. This will greatly contribute to improving the accuracy and stability of autonomous driving systems using 3D mapping and SLAM.

Related Info

Disclaimer

This system has been confirmed for demonstration purposes only, and we cannot take any responsibility for any malfunctions or other issues.

![Making your own CABLES for the Livox MID-360 [Practical Guide]](/uploads/media_files/livox_10_thumbnail-3954867486.jpg)